Temporal vs Airflow vs Argo

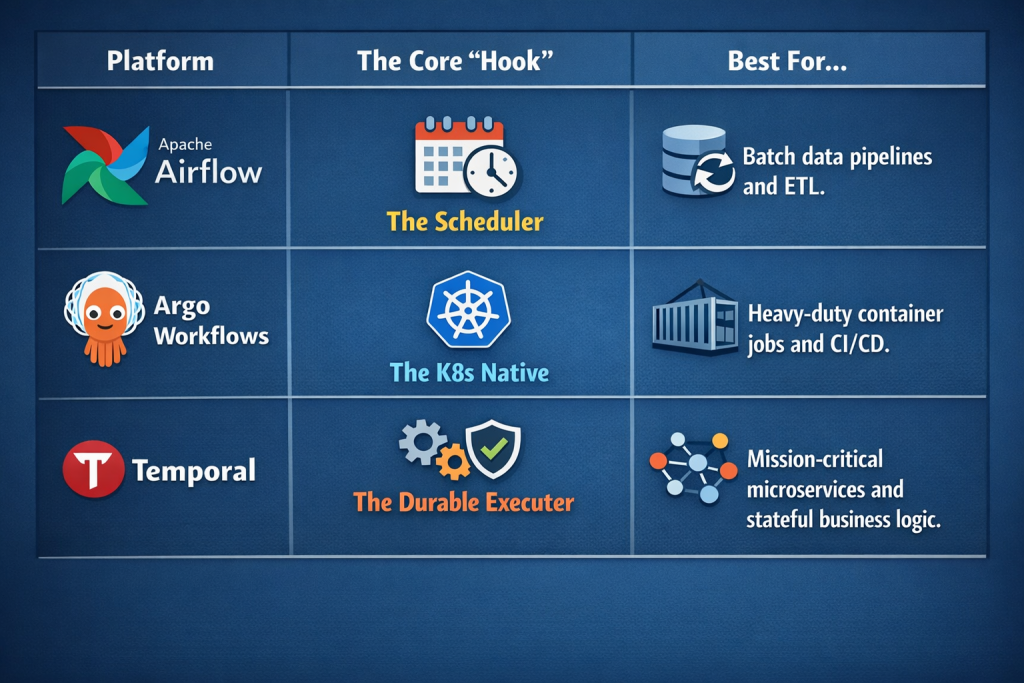

Choosing the right workflow orchestrator in 2026 is no longer about finding the “best” tool, but about matching the tool to your specific architectural “flavor.” While Airflow, Argo, and Temporal all manage sequences of tasks, they live in entirely different neighborhoods of the software ecosystem.

1. At a Glance: The Core Philosophy

Before diving into the technical details, it helps to understand the core philosophy of each platform.

2. Apache Airflow: The Data Engineer’s Swiss Army Knife

Airflow is an industry veteran. It models workflows as Directed Acyclic Graphs (DAGs). In 2026, it remains the gold standard for data engineering thanks to its massive library of Providers (pre-built integrations for Snowflake, AWS, GCP, etc.).

- How it works: You define tasks in Python. Airflow’s central scheduler decides when to trigger them based on time or external sensors.

- The Strength: Unrivaled ecosystem. If you need to move data from X to Y, there is already an Airflow operator for it.

- The Weakness: It is “stateless” between tasks. XComs, Airflow’s mechanism for passing data between steps, are meant for small values, so moving large amounts of data through them is notoriously clunky. The central scheduler can also become a bottleneck in high-scale environments.
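To make the DAG idea concrete, here is a minimal sketch of the ordering guarantee a DAG scheduler provides. It does not use Airflow at all (Airflow’s real API is a `DAG` object plus operators or `@task` functions); it uses only the standard library’s `graphlib`, and the task names are hypothetical, mirroring a dependency chain like `extract >> [transform_a, transform_b] >> load`.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical ETL tasks: each key lists the upstream tasks it depends on.
dag = {
    "extract": set(),
    "transform_a": {"extract"},
    "transform_b": {"extract"},
    "load": {"transform_a", "transform_b"},
}


def run_in_waves(graph: dict[str, set[str]]):
    """Yield batches of tasks whose upstream dependencies have all finished."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises CycleError if the graph is not acyclic
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks a scheduler could start now
        yield ready
        sorter.done(*ready)  # pretend every task in this wave succeeded


if __name__ == "__main__":
    for wave in run_in_waves(dag):
        print(wave)
```

Tasks within one wave (here `transform_a` and `transform_b`) have no dependency on each other, which is exactly what lets a real scheduler run them in parallel; a cycle is rejected up front, which is the “acyclic” part of DAG.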

3. Argo Workflows: The Kubernetes Purist

If your entire world revolves around Kubernetes (K8s), Argo is your best friend. It is implemented as a K8s Custom Resource Definition (CRD), meaning every task in your workflow is literally a K8s Pod.

- How it works: Workflows are typically defined in YAML (though Python SDKs like Hera exist). It excels at massive parallelization: because it scales on Kubernetes, you can spin up thousands of pods for a single job.

- The Strength: Cloud-native in the truest sense. Each step brings its own container image, so Task A can run in a Ruby container while Task B runs in a Python container.

- The Weakness: Steep learning curve for those not fluent in K8s. Argo also treats each container as a black box, so it has far less insight into the code running inside than Temporal has into its workflow code.
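The sketch below shows both strengths in one Argo Workflow manifest, built as a plain Python dict and printed as JSON (which `kubectl create -f` and `argo submit` accept alongside YAML). It assumes the `argoproj.io/v1alpha1` Workflow resource; the template names, the `ruby:3.3` / `python:3.12` images, and the shard values are illustrative choices, not anything prescribed by the article.

```python
import json


def polyglot_workflow(shards: list[str]) -> dict:
    """Build an Argo Workflow: a Ruby step, then a parallel Python fan-out."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": "polyglot-"},
        "spec": {
            "entrypoint": "main",
            "templates": [
                {
                    "name": "main",
                    # Outer list = sequential stages; inner list = parallel steps.
                    "steps": [
                        [{"name": "prepare", "template": "ruby-task"}],
                        [{
                            "name": "process",
                            "template": "python-task",
                            "arguments": {"parameters": [{"name": "shard", "value": "{{item}}"}]},
                            # withItems expands this step into one pod per shard.
                            "withItems": shards,
                        }],
                    ],
                },
                {
                    "name": "ruby-task",  # runs in a Ruby image
                    "container": {
                        "image": "ruby:3.3",
                        "command": ["ruby", "-e", "puts 'preparing data'"],
                    },
                },
                {
                    "name": "python-task",  # runs in a Python image
                    "inputs": {"parameters": [{"name": "shard"}]},
                    "container": {
                        "image": "python:3.12",
                        "command": ["python", "-c"],
                        "args": ["print('processing {{inputs.parameters.shard}}')"],
                    },
                },
            ],
        },
    }


if __name__ == "__main__":
    print(json.dumps(polyglot_workflow(["a", "b", "c"]), indent=2))
```

Scaling out is just a longer `shards` list: Argo creates one pod per item and Kubernetes schedules them, which is where the “thousands of pods” parallelism comes from.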

4. Temporal: The Invincible Code Platform

Temporal represents a paradigm shift called Durable Execution. It isn’t just a scheduler; it’s a way to ensure your code runs to completion, even if the underlying servers crash or the network goes down for three days.

- How it works: You write “Workflows” and “Activities” in standard code (Go, Java, Python, TypeScript). Temporal records every step as an event in the workflow’s history, stored in its persistence database. If a worker fails, another worker replays that history to rebuild the workflow’s state and resumes exactly where the last one left off.

- The Strength: Reliability. It’s perfect for transactions (e.g., “Charge credit card, then update inventory, then send email”). It supports long-running processes that can “sleep” for months without tying up worker compute.

- The Weakness: Complexity. Workflow code must be deterministic so that Temporal can replay it, which requires a mental shift, and you have to manage a more complex backend (or pay for Temporal Cloud).
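The replay idea behind Durable Execution can be illustrated with a toy event-sourced runner. This is not Temporal’s API (the real Python SDK uses decorators such as `@workflow.defn` and `@activity.defn`, and the history lives on the Temporal service, not in the worker); `ToyDurableRunner`, `SimulatedCrash`, and the order steps are hypothetical names for this sketch. It shows two things: completed steps are not re-executed after a crash, and replay only works if the workflow code is deterministic.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


class SimulatedCrash(Exception):
    """Raised to mimic a worker dying mid-workflow."""


@dataclass
class ToyDurableRunner:
    """Toy event-sourced runner illustrating replay; not Temporal's API."""
    history: list[tuple[str, Any]] = field(default_factory=list)  # stands in for the persisted event log
    _cursor: int = 0

    def activity(self, name: str, fn: Callable[[], Any]) -> Any:
        if self._cursor < len(self.history):
            # Replay: this step already completed, so reuse the recorded result.
            recorded_name, result = self.history[self._cursor]
            if recorded_name != name:
                # The code took a different path than last time: non-determinism.
                raise RuntimeError(f"non-deterministic replay: expected {recorded_name}, got {name}")
            self._cursor += 1
            return result
        result = fn()  # first execution: perform the side effect
        self.history.append((name, result))  # record it before moving on
        self._cursor += 1
        return result

    def run(self, workflow: Callable[["ToyDurableRunner"], Any]) -> Any:
        self._cursor = 0  # every run, including recovery, replays from the start
        return workflow(self)


charges: list[int] = []  # stands in for an external payment system
crash = {"pending": True}  # make the first attempt die right after charging


def charge_card() -> str:
    charges.append(4200)  # the side effect that must not repeat
    return "charge-1"


def order_workflow(rt: ToyDurableRunner) -> str:
    rt.activity("charge_card", charge_card)
    if crash["pending"]:
        crash["pending"] = False
        raise SimulatedCrash("worker died after charging")
    rt.activity("update_inventory", lambda: "reserved")
    return rt.activity("send_email", lambda: "sent")


runner = ToyDurableRunner()
try:
    runner.run(order_workflow)  # first "worker" crashes mid-workflow
except SimulatedCrash:
    pass
print(runner.run(order_workflow))  # a "new worker" replays the history and finishes
print(len(charges))  # the card was charged only once
```

The `RuntimeError` branch is the toy version of why determinism matters: if workflow code branched on, say, the current time or a random number, a replay could take a different path than the recorded history.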

5. Technical Comparison Table

| Feature | Airflow | Argo | Temporal |
|---|---|---|---|
| Primary Language | Python | YAML / Python | Go, Java, TS, Python, PHP |
| Infrastructure | VM or K8s (Celery/K8s) | Kubernetes Only | Any (Cloud or Self-hosted) |
| State Management | External (DB/S3) | Artifacts (S3/GCS) | Built-in (Event Sourcing) |
| Task Isolation | Process-based | Container-based | Process-based |
| Max Duration | Hours/Days | Hours/Days | Years (Indefinite) |
| Error Handling | Retries | Retries | Automatic State Recovery |

6. How They Coexist

In 2026, the workflow orchestration landscape has shifted away from “one tool to rule them all.” Instead, leading engineering teams are treating these platforms as specialized gears in a larger machine.

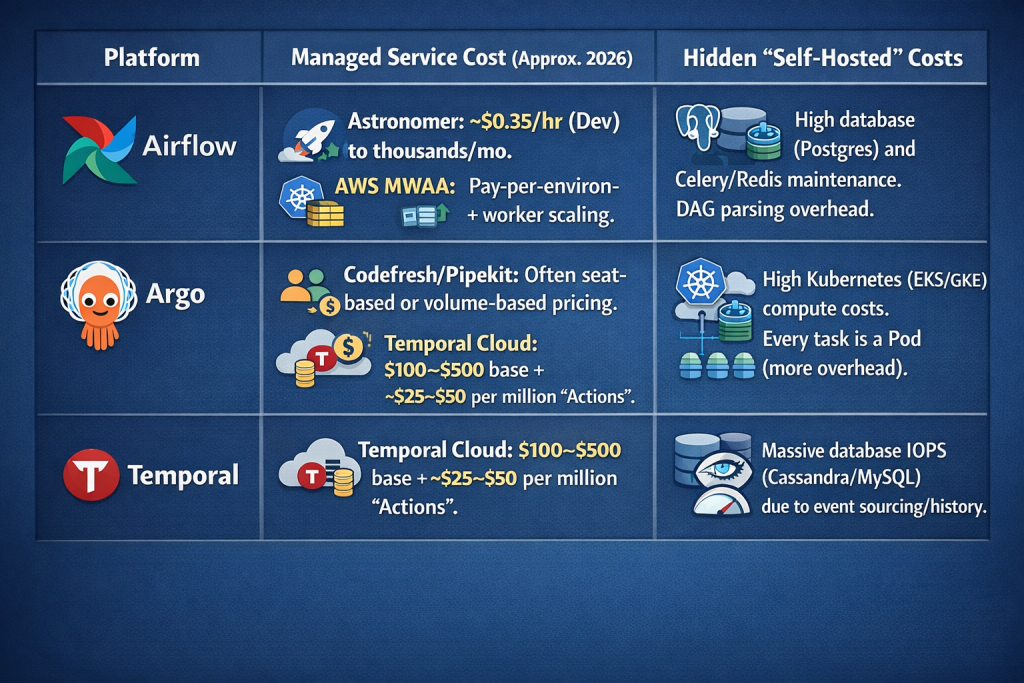

The Economics of Orchestration

When comparing costs, remember that open source is not free: you pay either in infrastructure plus engineering hours (self-hosted) or in usage fees (managed).

Pro Tip: Airflow and Argo costs are usually driven by compute time (how long tasks and their workers run), whereas Temporal Cloud pricing is tied to Actions (roughly, how many state changes occur). If you have a workflow that waits for 30 days, Temporal is nearly free while waiting; an Airflow sensor in its default poke mode occupies a worker slot for the entire wait unless you switch it to reschedule mode or use a deferrable operator.
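The arithmetic behind that tip is easy to sketch. The numbers below are hypothetical (a 30-day wait, one check per hour, roughly 2 seconds per check) and no prices are involved; the point is how much worker occupancy each Airflow sensor mode implies. Deferrable operators go further still by handing the wait to Airflow’s triggerer process instead of a worker.

```python
import math

SECONDS_PER_DAY = 24 * 60 * 60


def poke_mode_slot_seconds(wait_days: float) -> float:
    """Poke mode: the sensor holds one worker slot for the entire wait."""
    return wait_days * SECONDS_PER_DAY


def reschedule_mode_slot_seconds(wait_days: float, poke_interval_s: float, check_s: float) -> float:
    """Reschedule mode: the slot is released between checks, so only the checks count."""
    checks = math.ceil(wait_days * SECONDS_PER_DAY / poke_interval_s)
    return checks * check_s


# Hypothetical scenario: a 30-day wait, hourly checks, each check taking ~2 s.
poke = poke_mode_slot_seconds(30)
resched = reschedule_mode_slot_seconds(30, poke_interval_s=3600, check_s=2)
print(f"poke mode:       {poke / 3600:.0f} slot-hours")
print(f"reschedule mode: {resched / 3600:.1f} slot-hours")
```

Under compute-time pricing the first figure (720 slot-hours) is what you pay for; a durable timer in Temporal holds no worker at all while it waits.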

The Unified Stack (How they coexist)

You don’t have to choose just one. In a mature MLOps or DevOps environment, you might use all three to solve different parts of a single business problem.

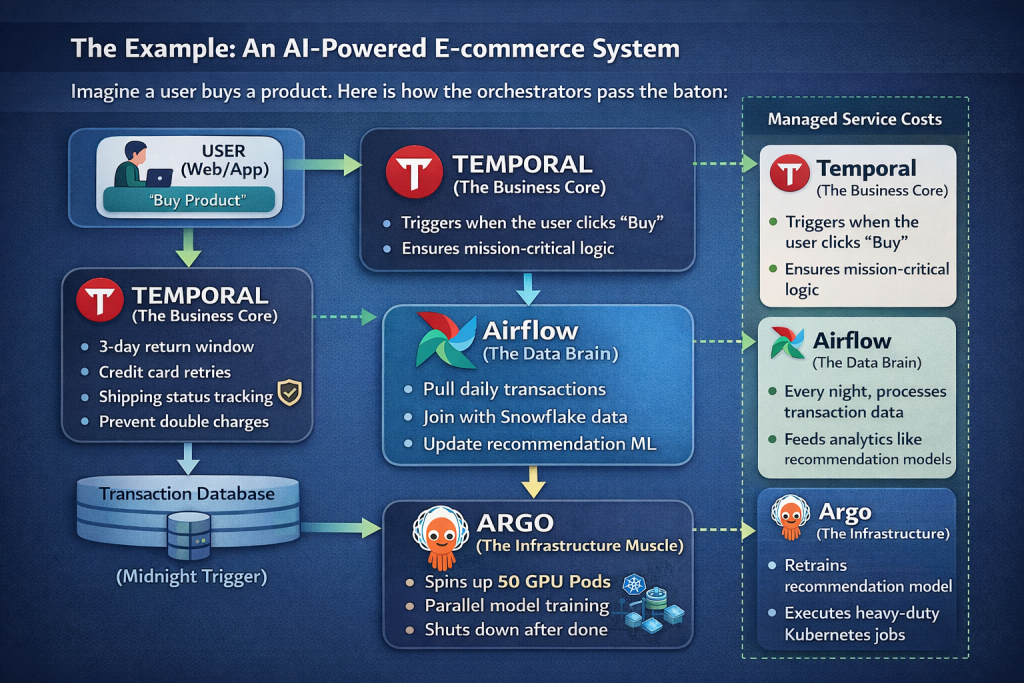

The Example: An AI-Powered E-commerce System

Imagine a user buys a product. Here is how the orchestrators pass the baton:

1. Temporal (The Business Core):
   - Role: Handles the mission-critical logic.
   - Action: Triggers when the user clicks “Buy.” It manages the 3-day return window, credit card retries, and shipping status. If a server dies, Temporal resumes the workflow without re-running completed steps, so (combined with an idempotent payment call) the customer isn’t double-charged.

2. Airflow (The Data Brain):
   - Role: The analytics layer.
   - Action: Every midnight, Airflow pulls the day’s successful transactions produced by the Temporal workflows, joins them with marketing data from Snowflake, and updates the recommendation engine.

3. Argo (The Infrastructure Muscle):
   - Role: The heavy lifter.
   - Action: When Airflow decides the recommendation model needs retraining, it triggers an Argo Workflow. Argo spins up 50 GPU-enabled Kubernetes pods to process the massive dataset in parallel and shuts them down when done.
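Temporal retries activities, so a payment activity can run more than once if a worker dies after the charge succeeds but before it reports completion. A common safeguard is an idempotency key that stays the same across retries. The sketch below assumes a hypothetical `FakePaymentGateway` that deduplicates by key; in a real Temporal activity you could derive the key from the workflow ID, which the SDKs expose to activities (in Python, via `activity.info()`).

```python
class FakePaymentGateway:
    """Hypothetical payment API that deduplicates requests by idempotency key."""

    def __init__(self) -> None:
        self._by_key: dict[str, str] = {}  # idempotency key -> charge ID
        self.ledger: list[tuple[str, int]] = []  # (charge ID, amount) for money actually moved

    def charge(self, idempotency_key: str, amount_cents: int) -> str:
        if idempotency_key in self._by_key:
            # A retry of a request already processed: return the original charge.
            return self._by_key[idempotency_key]
        charge_id = f"ch_{len(self.ledger) + 1}"
        self.ledger.append((charge_id, amount_cents))  # money moves only here
        self._by_key[idempotency_key] = charge_id
        return charge_id


def charge_card_activity(gateway: FakePaymentGateway, workflow_id: str, amount_cents: int) -> str:
    """Hypothetical activity body: the key is stable across every retry for this order."""
    return gateway.charge(f"{workflow_id}:charge", amount_cents)


gateway = FakePaymentGateway()
# The first attempt succeeds at the gateway, but imagine the worker dies before
# reporting completion, so the orchestrator retries the activity.
first = charge_card_activity(gateway, "order-1001", 4999)
retry = charge_card_activity(gateway, "order-1001", 4999)
print(first, retry, len(gateway.ledger))
```

Temporal guarantees that the workflow will not move past the payment step until the activity reports success; the idempotency key is what makes the retry harmless on the payment side.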

Implementation: Bridging the Platforms

Technically connecting them is simpler than it looks:

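- Airflow → Temporal: A Python task in your DAG (or a small custom operator) can use the Temporal SDK client to start a Temporal workflow and wait for it to complete. Writing it as a deferrable operator lets the wait happen asynchronously without holding a worker slot.

- Argo → Airflow: Use Argo CD, the GitOps tool from the same Argo project, to deploy your Airflow cluster itself (Argo CD manages the infrastructure, Airflow manages the data).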

- Temporal → Argo: A Temporal activity can call the Kubernetes API to trigger an Argo Workflow for a high-compute task that requires specific container resources.
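For the Temporal → Argo bridge, an activity only needs to create a `Workflow` custom resource through the Kubernetes API. The sketch below builds (but does not send) that request with the standard library; the API server URL, namespace, bearer token, and image are hypothetical placeholders. In practice you might instead call the official Kubernetes Python client’s `CustomObjectsApi().create_namespaced_custom_object(group="argoproj.io", version="v1alpha1", namespace=..., plural="workflows", body=manifest)`.

```python
import json
import urllib.request


def argo_submit_request(api_server: str, namespace: str, token: str, image: str) -> urllib.request.Request:
    """Build (not send) a Kubernetes API request that creates an Argo Workflow."""
    manifest = {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "Workflow",
        "metadata": {"generateName": "gpu-job-", "namespace": namespace},
        "spec": {
            "entrypoint": "main",
            "templates": [{
                "name": "main",
                "container": {
                    "image": image,
                    # Request one GPU for the step (assumes the NVIDIA device plugin).
                    "resources": {"limits": {"nvidia.com/gpu": 1}},
                },
            }],
        },
    }
    # Custom resources live under /apis/<group>/<version>/namespaces/<ns>/<plural>.
    url = f"{api_server}/apis/argoproj.io/v1alpha1/namespaces/{namespace}/workflows"
    return urllib.request.Request(
        url,
        data=json.dumps(manifest).encode(),
        method="POST",
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
    )


if __name__ == "__main__":
    req = argo_submit_request("https://k8s.example.internal:6443", "ml", "dummy-token", "example.com/trainer:latest")
    print(req.get_method(), req.full_url)
```

Inside a real activity you would send the request (for example with `urllib.request.urlopen` configured with the cluster CA), then either return the created workflow’s name or poll its status so Temporal can retry on failure.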

Conclusion: The “Right” Tool is a Spectrum

In 2026, the debate over Temporal vs. Airflow vs. Argo has moved past “which is better?” to “which architectural gap are we filling?”

Choosing an orchestrator is effectively choosing how you want to handle state and failure.

- If your priority is Data Gravity and complex ETL scheduling, Airflow remains the king of the ecosystem.

- If your world is Kubernetes-native and you need to burst-scale containerized jobs for CI/CD or ML, Argo is the natural extension of your infrastructure.

- If you are building Mission-Critical Applications where every step must be durable and “code-first”, Temporal provides a safety net that traditional schedulers simply cannot match.

The most sophisticated engineering teams aren’t picking just one; they use Temporal for business logic, Airflow for insights, and Argo for container-native scalability. By understanding each tool’s strengths and pricing model, you can build a resilient, scalable stack that doesn’t just run your workflows but guarantees them.

References & Further Reading

Official Documentation

- Apache Airflow: https://airflow.apache.org/docs/ – Core concepts on DAGs and Operators.

- Argo Workflows: https://argoproj.github.io/argo-workflows/ – Technical specs for K8s Custom Resources.

- Temporal Technologies: https://docs.temporal.io/ – The definitive guide to Durable Execution.

Pricing & Managed Services

- Astronomer (Managed Airflow): https://www.astronomer.io/pricing/

- Temporal Cloud Pricing: https://temporal.io/cloud-pricing

- Pipekit (Control Plane for Argo): https://pipekit.io/pricing

Architectural Comparisons

- “The Case for Durable Execution” – Temporal Blog (2025/2026 updates).

- “Airflow vs. Argo: Why we use both” – Engineering Blog archives from Netflix/Adobe.

- CNCF Landscape: https://landscape.cncf.io/ – Mapping the orchestration and scheduling ecosystem.